Extract, Transform, Load (ETL)

Empower your organization with a robust ETL platform engineered to handle complex workflows with unparalleled speed, scalability, and reliability.

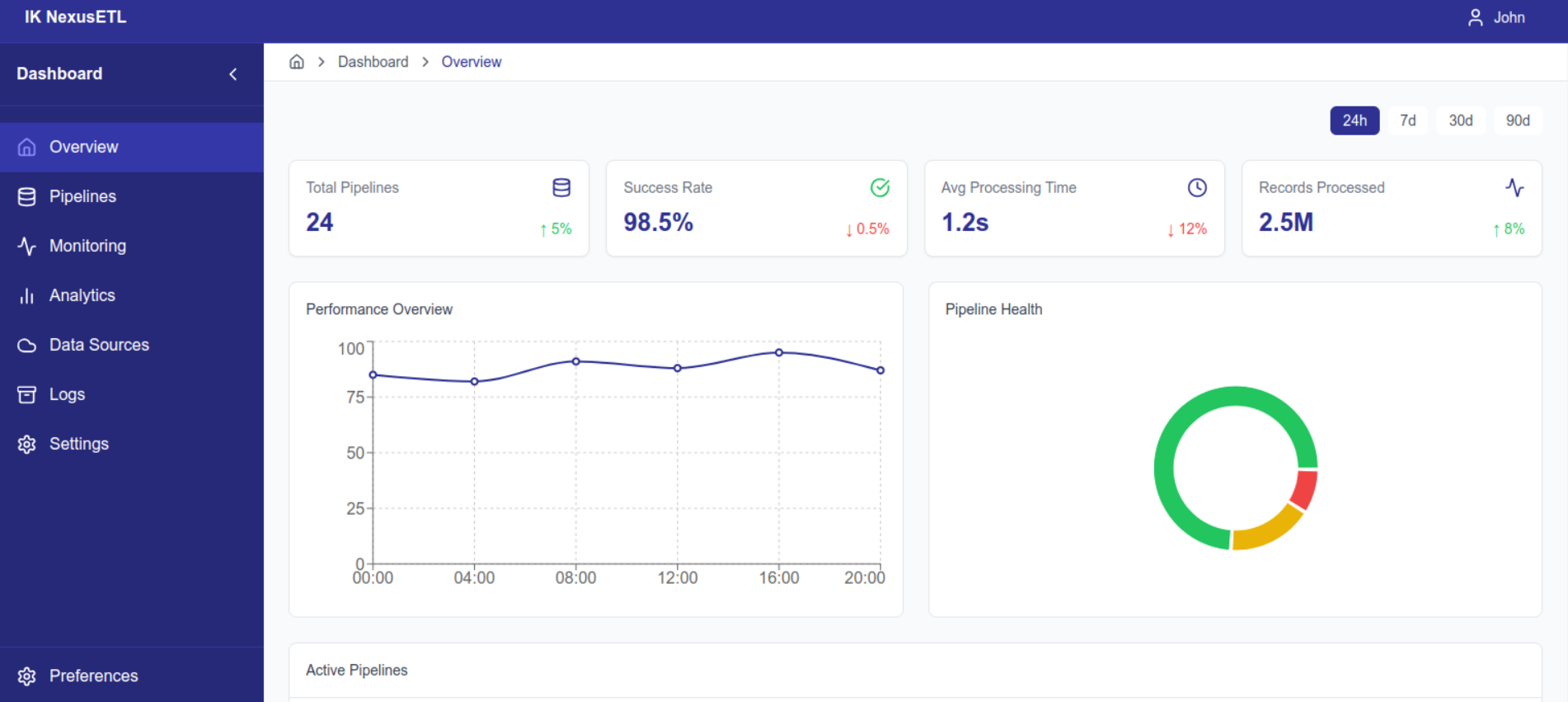

Overview

ETL (Extract, Transform, Load) processes are the backbone of modern data engineering: they let organizations collect data from disparate sources, clean it, and consolidate it for analytics and decision-making. As big data, IoT, and real-time analytics drive data volumes ever higher, our ETL platform is engineered to run even the most complex of these workflows at scale.
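To make the three stages concrete, here is a minimal, standard-library-only sketch of an ETL run. It is not the platform's API: the CSV sample, the `orders` table, and the `extract`/`transform`/`load` function names are hypothetical, and an in-memory SQLite database stands in for a real warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical raw export from a source system; note the messy values.
RAW_CSV = """order_id,customer,amount
1, alice ,19.99
2,bob,
3,Carol,5.00
"""

def extract(text: str) -> list[dict[str, str]]:
    """Extract: read rows from a CSV source."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows: list[dict[str, str]]) -> list[tuple[int, str, float]]:
    """Transform: trim whitespace, normalize names, drop rows with no amount."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # skip incomplete records
        cleaned.append((int(row["order_id"]), row["customer"].strip().title(), float(amount)))
    return cleaned

def load(rows: list[tuple[int, str, float]], conn: sqlite3.Connection) -> None:
    """Load: write the cleaned rows into a target table (idempotent upsert)."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT OR REPLACE INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()

conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
print(conn.execute("SELECT * FROM orders ORDER BY order_id").fetchall())
# [(1, 'Alice', 19.99), (3, 'Carol', 5.0)]
```

Keeping each stage a separate function means any one of them (for example, the target in `load`) can be swapped without touching the others.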

Key Features

High-Performance Data Ingestion

Supports batch and real-time streaming from multiple data sources, including relational databases, APIs, cloud storage, and IoT devices.
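One common way to serve batch and streaming sources with a single code path is micro-batching: records are pulled lazily and grouped into fixed-size chunks, so a finite list and an unbounded feed are handled identically. The sketch below illustrates that general technique only; `sensor_stream`, `ingest`, and the batch sizes are hypothetical and say nothing about how the platform itself ingests data.

```python
from itertools import islice
from typing import Iterable, Iterator

def micro_batches(records: Iterable[dict], batch_size: int) -> Iterator[list[dict]]:
    """Group a (possibly unbounded) iterable of records into fixed-size batches."""
    it = iter(records)
    while batch := list(islice(it, batch_size)):
        yield batch

def sensor_stream() -> Iterator[dict]:
    """Hypothetical IoT source; a real one would poll a device or message queue."""
    for i in range(7):
        yield {"sensor": "s1", "reading": i * 1.5}

def ingest(batch: list[dict]) -> int:
    """Stand-in for writing a batch to the target; returns the rows written."""
    return len(batch)

# Batch mode: a finite list goes through the same path as a stream.
batch_source = [{"sensor": "s2", "reading": 0.0}] * 3
print([ingest(b) for b in micro_batches(batch_source, batch_size=5)])  # [3]

# Streaming mode: records are pulled lazily from the generator.
print([ingest(b) for b in micro_batches(sensor_stream(), batch_size=3)])  # [3, 3, 1]
```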

Advanced Transformation Capabilities

Enables data wrangling, cleansing, and enrichment with AI-powered rules, custom transformation logic, and metadata-driven pipelines.
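In a metadata-driven pipeline, transformations are described as data rather than hard-coded, and a generic engine applies them. The following is a minimal sketch of that idea under stated assumptions: the rule format, the `RULES` list, the `OPS` registry, and `apply_rules` are all hypothetical, and the sketch does not model the AI-powered rules mentioned above.

```python
from typing import Any, Callable

# Hypothetical rule metadata; a real pipeline would load this from a
# config file or data catalog instead of hard-coding it.
RULES = [
    {"column": "email", "op": "lower"},
    {"column": "email", "op": "strip"},
    {"column": "country", "op": "default", "value": "UNKNOWN"},
    {"column": "age", "op": "cast_int"},
]

# Registry mapping rule names to functions of (value, rule) -> new value.
# Custom logic is added by registering another entry here.
OPS: dict[str, Callable[[Any, dict], Any]] = {
    "lower": lambda v, r: v.lower() if isinstance(v, str) else v,
    "strip": lambda v, r: v.strip() if isinstance(v, str) else v,
    "default": lambda v, r: r["value"] if v in (None, "") else v,
    "cast_int": lambda v, r: int(v) if v not in (None, "") else None,
}

def apply_rules(record: dict, rules: list[dict]) -> dict:
    """Apply each rule, in order, to a copy of the record."""
    out = dict(record)
    for rule in rules:
        col = rule["column"]
        out[col] = OPS[rule["op"]](out.get(col), rule)
    return out

print(apply_rules({"email": "  Ana@Example.COM ", "country": "", "age": "42"}, RULES))
# {'email': 'ana@example.com', 'country': 'UNKNOWN', 'age': 42}
```

Because the rules are plain data, they can be versioned, reviewed, and reused across pipelines without changing the engine.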

CDC (Change Data Capture)

Captures incremental changes and manages schema drift, keeping datasets consistent in real time.
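The sketch below shows the two ideas in miniature. For simplicity it detects changes by comparing two keyed snapshots, a swapped-in technique: production CDC is usually log-based, reading the source database's transaction log instead. When incoming rows carry columns the target lacks, the target schema is widened and existing rows are backfilled. All names (`capture_changes`, `detect_drift`, `apply_changes`) and the sample data are hypothetical.

```python
from typing import Any, Dict, List, Optional, Set, Tuple

Row = Dict[str, Any]
Change = Tuple[str, int, Optional[Row]]  # (operation, primary key, new row)

def capture_changes(old: Dict[int, Row], new: Dict[int, Row]) -> List[Change]:
    """Compare two keyed snapshots and emit insert/update/delete events."""
    changes: List[Change] = []
    for key, row in new.items():
        if key not in old:
            changes.append(("insert", key, row))
        elif row != old[key]:
            changes.append(("update", key, row))
    for key in old.keys() - new.keys():
        changes.append(("delete", key, None))
    return changes

def detect_drift(target_columns: Set[str], changes: List[Change]) -> Set[str]:
    """Return columns present in incoming rows but missing from the target."""
    incoming: Set[str] = set()
    for _, _, row in changes:
        if row is not None:
            incoming |= row.keys()
    return incoming - target_columns

def apply_changes(target: Dict[int, Row], columns: Set[str], changes: List[Change]) -> None:
    """Widen the schema for drifted columns, then apply the events to the target."""
    new_cols = detect_drift(columns, changes)
    columns.update(new_cols)  # in a warehouse: ALTER TABLE ... ADD COLUMN
    for row in target.values():
        for col in new_cols:
            row.setdefault(col, None)  # backfill existing rows
    for op, key, row in changes:
        if op == "delete":
            target.pop(key, None)
        else:
            # Align every written row with the (possibly widened) schema.
            target[key] = {col: row.get(col) for col in sorted(columns)}

source_v1 = {1: {"id": 1, "name": "Ana"}, 2: {"id": 2, "name": "Ben"}}
source_v2 = {2: {"id": 2, "name": "Benjamin", "tier": "gold"}, 3: {"id": 3, "name": "Cy"}}

target = {k: dict(v) for k, v in source_v1.items()}
columns = {"id", "name"}
apply_changes(target, columns, capture_changes(source_v1, source_v2))
print(target)
# {2: {'id': 2, 'name': 'Benjamin', 'tier': 'gold'}, 3: {'id': 3, 'name': 'Cy', 'tier': None}}
```

Only the changed rows travel downstream, which is what keeps incremental loads cheaper than full reloads.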

Scalable Infrastructure

Built to handle petabyte-scale workloads with distributed processing and parallel computation frameworks like Apache Spark.

Integration-Ready

Provides ready-made connectors for Snowflake, Amazon Redshift, Google BigQuery, and Azure Synapse Analytics, supporting hybrid and multi-cloud deployments.

Business Benefits

Empower Data Teams

Give data analysts and data scientists analytics-ready datasets so they can reach insights faster.

Minimize Latency

Minimize latency in data pipelines with real-time data streaming and CDC integration.

Reduce Development Time

Reduce development time and costs with reusable ETL templates and self-healing pipelines.

Technology Highlights

The platform uses an event-driven architecture and supports orchestration tools such as Apache Airflow and Prefect. It also integrates with common CI/CD pipelines, so workflow changes can be tested and delivered continuously.